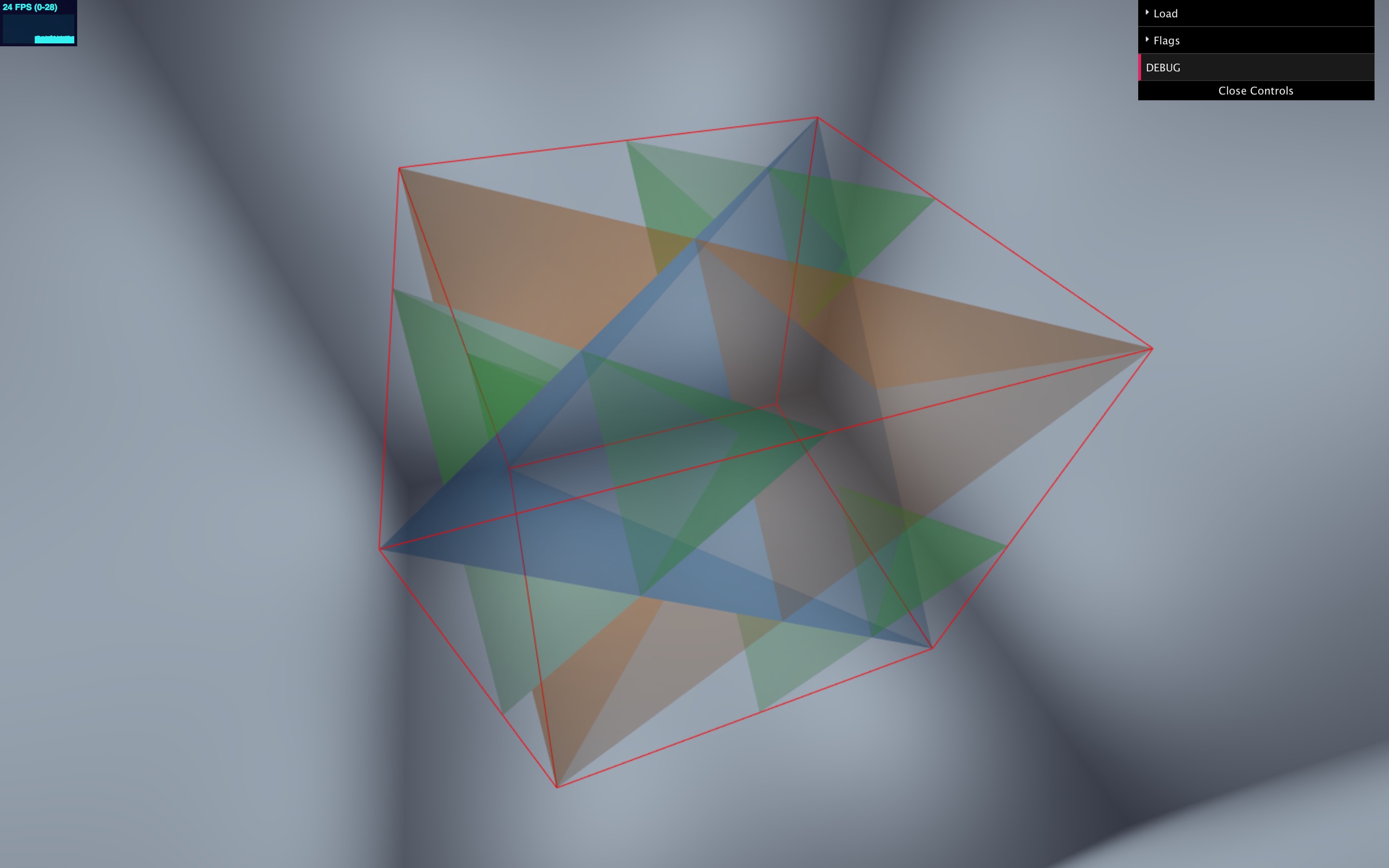

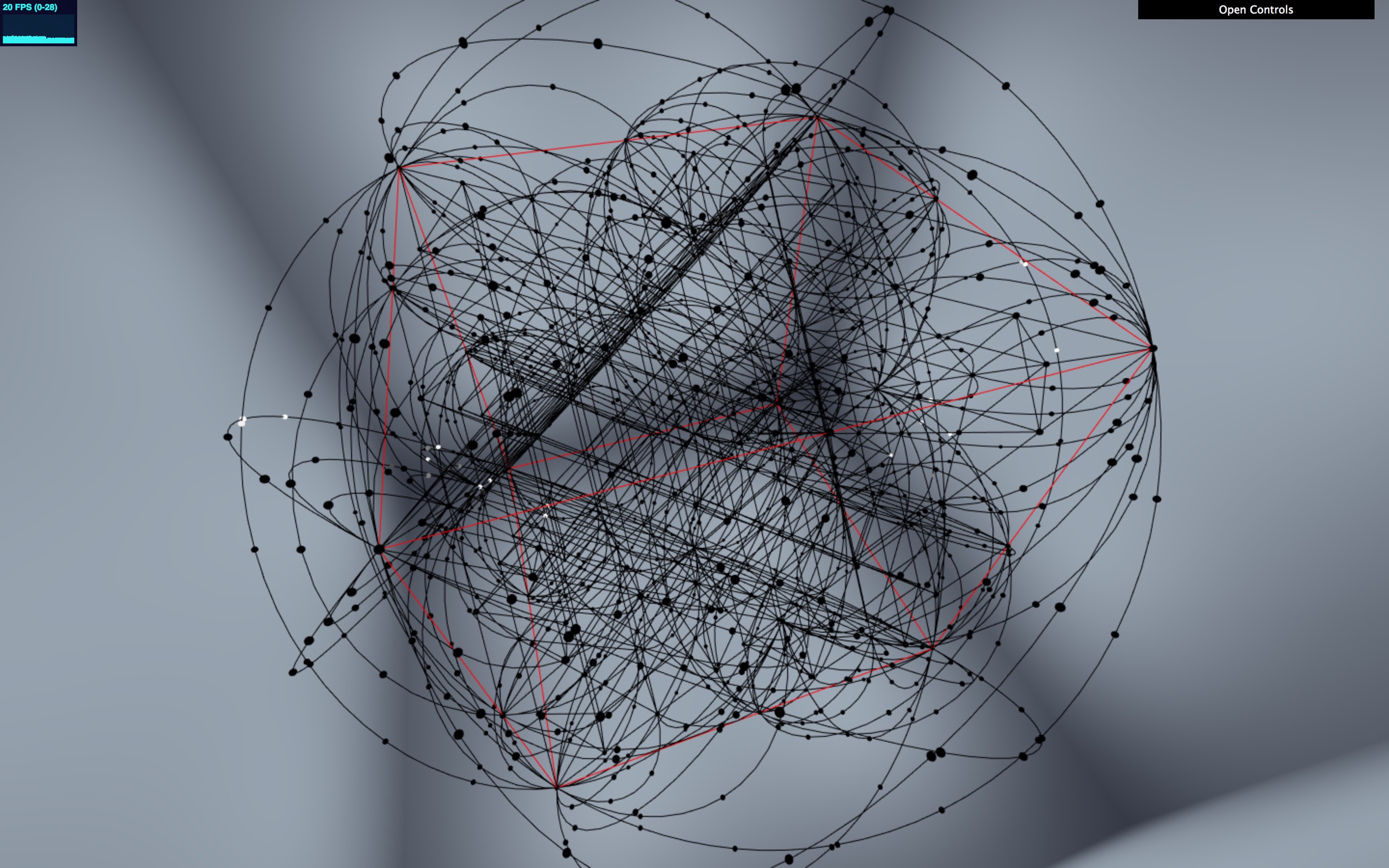

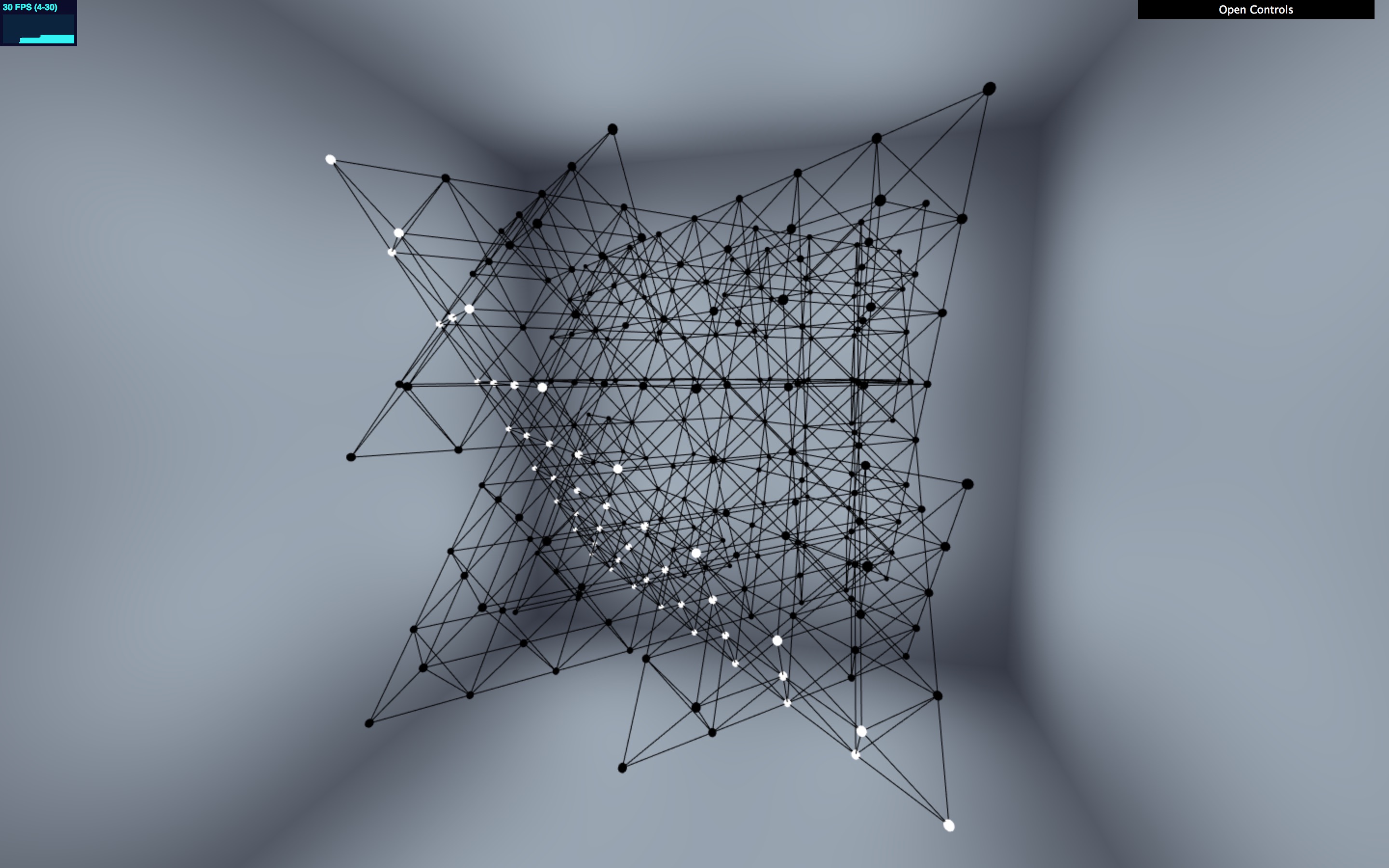

The Holohedron is a fixed, sparse, three-dimensional form that needs to be able to display a variety of phenomena, many of which don’t relate directly to the Holohedron shape. Some of the phenomena (blood flow) are three-dimensional, while others (ferromagnetism) are not. The central core of the Holohedron is formed of two regular tetrahedra, one of which is stellated (see the image above), but around this core there is a lot more detail in the form of circumscribed arcs at various scales, mapped out as in this image:

So, how do we reconcile an assortment of 2D and 3D processes with such a complex physical form? We could take a decent stab at the ferromagnetism model, which operates on a triangular grid, and hard-wire its outputs to whichever triangular face we like. But that doesn’t help us with models which operate in a three-dimensional Cartesian space. And even if we did manage to come up with acceptable implementations for all our models, we’d still be faced with the complex job of combining them on the same display hardware.

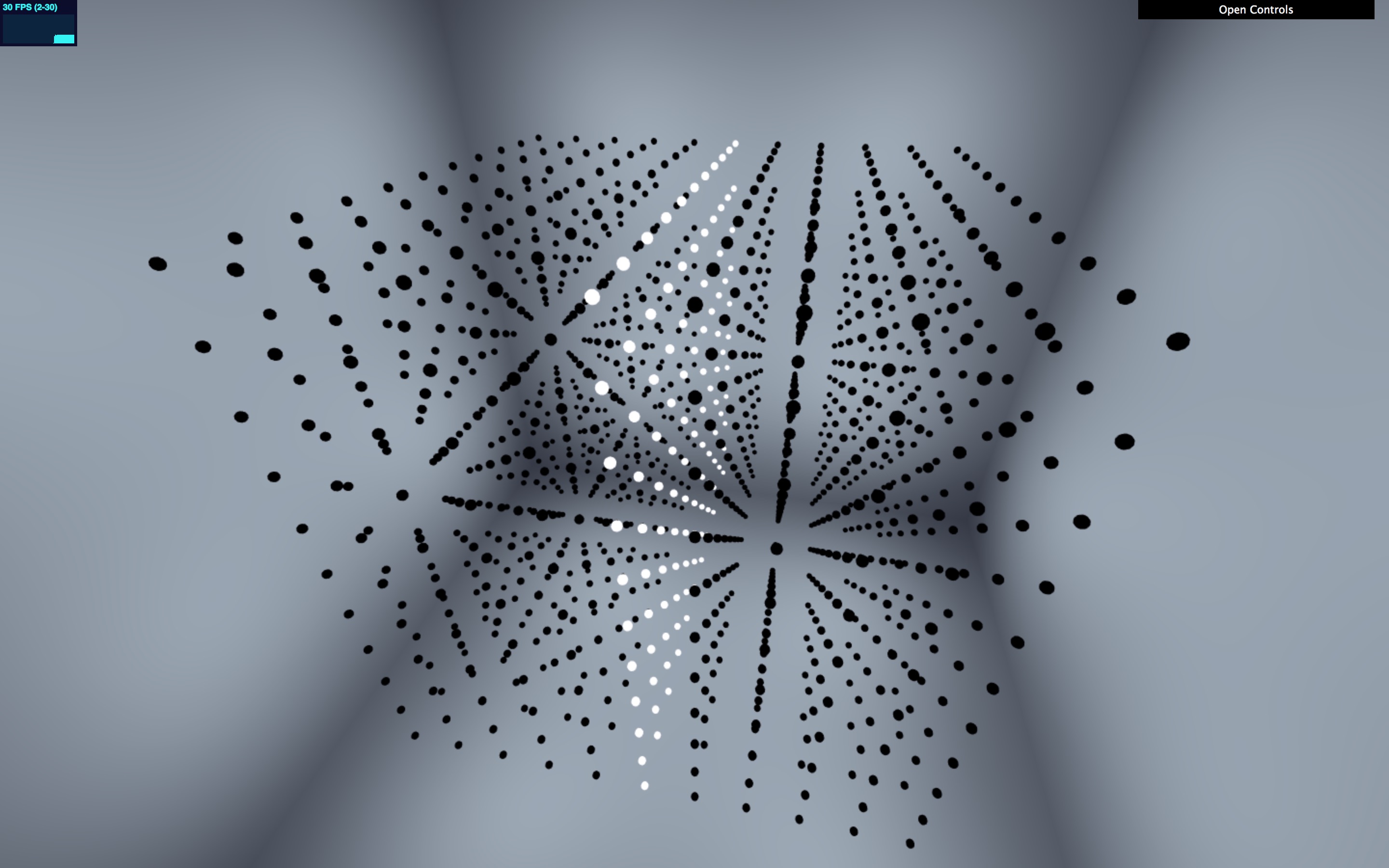

There’s a better, more general approach, informed by the way we built Plenum (although it’s a fairly obvious approach and has no doubt been used in digital art elsewhere: I refer the interested reader to scalar fields). Any displayed pattern on a canvas of discrete points is implemented as a function from a point’s position to the displayed value. In the image below, the display function maps point position \( (x, y) \) to point size, so even though the points are arranged irregularly, and are constantly moving, visual artifacts like the concentric rings remain in a stable position:

Such scalar field functions have numerous advantages:

- they are compact (compared to almost any representation of the data points)

- they are impervious to changes in the display geometry

- they can be mapped and composed in numerous ways to generate new functions (and we can build higher-order combinators over them)

- we can produce time-based animations by adding a further parameter \( t \) representing time
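As a minimal sketch of the idea (this is not the Cosmoscope code; `rings`, `scale-field`, `max-field` and `pulse` are names invented here for illustration), a pattern like the concentric rings can be written as a function of position alone, and combinators then build new fields from it:

```clojure
;; Hypothetical names throughout; an illustration, not the Cosmoscope API.

(defn rings
  "Scalar field over (x, y): concentric rings around the origin, in [0, 1]."
  [x y]
  (let [r (Math/sqrt (+ (* x x) (* y y)))]
    (* 0.5 (+ 1.0 (Math/cos (* 2.0 Math/PI r))))))

(defn scale-field
  "Combinator: multiply every value of field `f` by `k`."
  [k f]
  (fn [& args] (* k (apply f args))))

(defn max-field
  "Combinator: pointwise maximum of fields `f` and `g`."
  [f g]
  (fn [& args] (max (apply f args) (apply g args))))

(defn pulse
  "Add a time parameter: fade the (x, y) field `f` in and out over `t`."
  [f]
  (fn [x y t]
    (* (f x y) 0.5 (+ 1.0 (Math/sin t)))))

;; The display only ever asks "what is the value at this point?",
;; so the points themselves can sit anywhere and move freely.
(def dim-rings (scale-field 0.25 rings))
(def breathing-rings (pulse rings))
```

Because the rings are defined by position rather than by point index, they stay put however the points are arranged.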

Obviously, we could use vector field functions (returning \( (r, g, b) \)) if we were working in colour, and also build combinators which mapped between full RGB, monochrome, or arbitrary colour gradients. (We have a varied selection of scalar and vector functions in the Cosmoscope code base.)
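A colour-gradient combinator of that kind might look roughly like this. It is a sketch under our own assumptions: `lerp`, `with-gradient` and `to-mono` are hypothetical names, colours are `[r g b]` triples in the range 0 to 1, and the monochrome conversion is a plain average rather than any particular luminance formula.

```clojure
;; Hypothetical helpers; not taken from the Cosmoscope code base.

(defn lerp
  "Linear interpolation from `a` to `b` as `u` goes from 0 to 1."
  [a b u]
  (+ a (* u (- b a))))

(defn with-gradient
  "Lift scalar field `f` over (x, y, z, t) into an RGB field by
  interpolating between colours `from` and `to`."
  [[r0 g0 b0] [r1 g1 b1] f]
  (fn [x y z t]
    (let [u (-> (f x y z t) (max 0.0) (min 1.0))] ; clamp to [0, 1]
      [(lerp r0 r1 u) (lerp g0 g1 u) (lerp b0 b1 u)])))

(defn to-mono
  "Collapse an RGB field back to a scalar field (simple average)."
  [f]
  (fn [x y z t]
    (let [[r g b] (f x y z t)]
      (/ (+ r g b) 3.0))))

;; Black-to-orange gradient over a constant mid-grey field.
(def orange-ish
  (with-gradient [0 0 0] [1 0.5 0] (fn [x y z t] 0.5)))
```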

So: the Holohedron operates via combinators over scalar functions in \( (x, y, z, t) \), and we have a variety of test animations, such as those using trigonometry to rotate visual elements or to produce periodic animation patterns (with trigonometry over \( t \)). That gets us from a “model” parameter space, operating in three-dimensional Cartesian space (and probably implemented in Emscripten, as we described earlier), to the projector surfaces on the Holohedron.
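To give a flavour of those test animations, here is a sketch of a rotation combinator and a periodic one. The names `rotate-z` and `throb` are ours, invented for this example. The trick in `rotate-z` is to sample the wrapped function at the inverse-rotated position, which makes the pattern itself appear to rotate forwards.

```clojure
;; Hypothetical combinators over (x, y, z, t) functions.

(defn rotate-z
  "Make field `f` appear to rotate about the z axis at `omega`
  radians per unit of time."
  [omega f]
  (fn [x y z t]
    (let [a (- (* omega t))          ; inverse rotation angle
          c (Math/cos a)
          s (Math/sin a)]
      (f (- (* c x) (* s y))
         (+ (* s x) (* c y))
         z
         t))))

(defn throb
  "Periodic animation: modulate `f` by a sine wave over `t`,
  with the given `period`."
  [period f]
  (fn [x y z t]
    (* (f x y z t)
       0.5
       (+ 1.0 (Math/sin (/ (* 2.0 Math/PI t) period))))))

;; A field which lights up the half-space x > 0 ...
(def half-space (fn [x y z t] (if (pos? x) 1.0 0.0)))

;; ... swept around the z axis, a quarter turn per time unit.
(def sweeping (rotate-z (/ Math/PI 2.0) half-space))
```

At `t = 1` the lit half-space has swung round from `x > 0` to `y > 0`.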

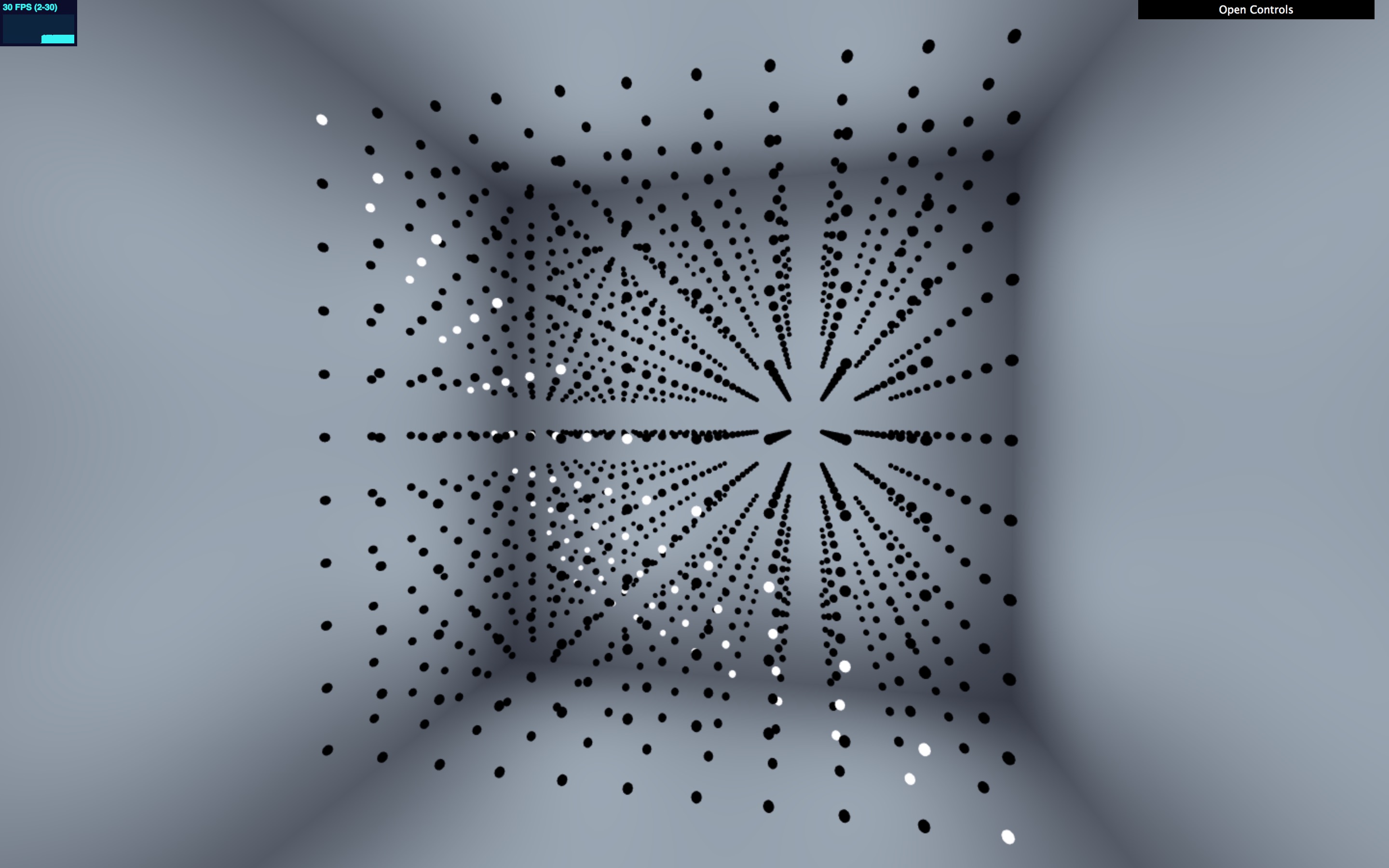

But suppose we really do want to draw things accurately on one of the triangular faces? Well, we can do that by doing our drawing in Cartesian space, in one plane (with, say, \( z = 0 \) for simplicity) and then shifting the appropriate part of the plane into the coordinate system of the Holohedron itself using an affine transformation. Here is an example: we have a generator function which lights up the X-Y plane, by returning \( 1 \) for all points very close to \( z = 0 \) and \( 0 \) otherwise. To illustrate that, we’ll replace the Holohedron form with a 3D Cartesian point grid:

Now we need to shift this generator function from the \( z = 0 \) plane to a plane which coincides with a face of the Holohedron. Here’s what that looks like if we keep the Cartesian grid in place:

And now let’s swap the Cartesian grid out and put the Holohedron back:

And here’s the code:

```clojure
(def tetra-plane
  (gx/affine-generator [[-1 -1 1] [1 -1 -1] [-1 1 -1]]
                       [[-1 -1 0] [1 1 0] [1 -1 0]]
                       (gx/z-proximal-generator 0
                                                (gx/wrap-basis-fn 0 (fn [x y z t] 1.0)))))
```

Let’s read that from the inside out. `(fn [x y z t] 1.0)` is the initial generator which just outputs white (`1.0`) everywhere. The `z-proximal-generator` transforms that into a generator which puts out its value of `1.0` near the \( z = 0 \) plane and fades it to zero away from that plane. Then the `affine-generator` shifts the display. Anything we look at on the plane `[-1 -1 1] [1 -1 -1] [-1 1 -1]` (the Holohedron tetrahedral plane) will be calculated by looking at the enclosed generator on the plane `[-1 -1 0] [1 1 0] [1 -1 0]`, which you can see is specified by a triangle of three distinct points where \( z = 0 \).
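The z-proximal step is easy to sketch on plain `(x, y, z, t)` functions. What follows is not the `gx/z-proximal-generator` source: the name `z-proximal`, the explicit `width` parameter and the linear fade are all assumptions made for the sake of the example.

```clojure
;; Hypothetical stand-in for the z-proximal idea, on plain functions
;; rather than generators. `width` and the linear falloff are assumptions.

(defn z-proximal
  "Wrap `f` so that it shows at full strength on the plane z = `z0`
  and fades linearly to zero at distance `width` from that plane."
  [z0 width f]
  (fn [x y z t]
    (let [distance (Math/abs (double (- z z0)))
          fade     (max 0.0 (- 1.0 (/ distance width)))]
      (* fade (f x y z t)))))

;; White everywhere, then restricted to a slab around z = 0.
(def xy-plane
  (z-proximal 0 0.5 (fn [x y z t] 1.0)))
```

Sampling `xy-plane` on the plane gives `1.0`, halfway through the slab gives `0.5`, and anything further than `width` away gives `0.0`.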

We’ll look at the affine mapping in another post, but we should explain the wrap-basis-fn call. The functions over \( (x, y, z, t) \) which we’ve called “generators” are better described as “basis functions”. (That’s not an accurate use of the mathematical term, but we stole the usage from Jitter.) Once we started working in Emscripten, it became clear that we’d probably be working with generators which have a non-trivial iteration from one time interval to the next (and possibly side-effecting too). An obvious example of this is Game of Life, which of course we were compelled to implement. So, it made sense to separate the advance of time from the “sampling” operation at \( (x, y, z) \). Hence, generators. This is the protocol:

```clojure
(defprotocol GENERATOR
  "Generator form which separates time progression from x/y/z sampling."
  (locate [this t]
    "Locate to time `t`, return new state. (For Emscripten generators,
     may well just side-effect.)")
  (sample [this x y z]
    "Sample state at current time and `(x, y, z)`. Return single value
     or RGB triple."))
```

It’s trivial to take a basis function over \( (x, y, z, t) \) and turn it into a generator:

```clojure
(defn wrap-basis-fn
  "Wrap a simple `(x, y, z, t)` basis function into a `GENERATOR` form."
  [t f]
  (reify GENERATOR
    (locate [this t']
      (wrap-basis-fn t' f))
    (sample [this x y z]
      (f x y z t))))
```

And so to the money shots. We have a Game of Life implementation, which plays Life in the X-Y plane while scrolling successive generations through Z. Rendered into our Cartesian grid, it looks like this:

(The reason that some of the points are grey is that, for some reason, we set up Game of Life to run with a grid size of 9 while the pixel grid has size 11, so the renderer is interpolating.)
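To make the split between `locate` and `sample` concrete, here is a small sketch of a Life generator written against the protocol. It is not our actual implementation (which scrolls generations through Z): `life-step` and `life-generator` are names made up for this example, the grid is unbounded rather than 9 cells across, `z` is simply ignored, and time can only move forwards. The protocol is repeated so that the block can be evaluated on its own.

```clojure
(defprotocol GENERATOR
  (locate [this t] "Locate to time `t`, return new state.")
  (sample [this x y z] "Sample state at current time and `(x, y, z)`."))

(defn life-step
  "One generation of Life on a set of live [col row] cells."
  [live]
  (let [neighbours (for [[c r] live
                         dc [-1 0 1]
                         dr [-1 0 1]
                         :when (not= [dc dr] [0 0])]
                     [(+ c dc) (+ r dr)])]
    (set (for [[cell n] (frequencies neighbours)
               :when (or (= n 3)
                         (and (= n 2) (live cell)))]
           cell))))

(defn life-generator
  "Hypothetical generator holding the `live` cells at generation `gen`."
  [live gen]
  (reify GENERATOR
    ;; Time progression: the non-trivial part, done once per frame.
    (locate [_ t]
      (let [target (long t)]
        (when (< target gen)
          (throw (ex-info "This sketch cannot step backwards"
                          {:at gen :asked target})))
        (life-generator (nth (iterate life-step live) (- target gen))
                        target)))
    ;; Sampling: a cheap lookup, done once per display point.
    (sample [_ x y z]
      (if (contains? live [(Math/round (double x))
                           (Math/round (double y))])
        1.0
        0.0))))

;; A horizontal blinker, advanced by one generation, is vertical.
(def blinker #{[0 1] [1 1] [2 1]})
(def after-one (locate (life-generator blinker 0) 1))
```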

How do we get this onto a tetrahedron?

- (i) extract one slice (generation) of the game, using the `z-proximal-generator` described above;

- (ii) cut this slice along a diagonal, resulting in two right-angled triangles;

- (iii) affine-map each triangle to a face of the tetrahedron, making sure that the original hypotenuse edges are reattached on a single tetrahedron edge.
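Steps (ii) and (iii) can be sketched with barycentric coordinates. This is only an illustration of the geometry, not how `gx/affine-generator` is implemented, and it only handles points lying on a face. The helper names are ours, and the fourth vertex `D = [1 1 1]` is our assumption for completing the regular tetrahedron whose face appears in the code above. The two right-angled triangles share the diagonal from `[-1 -1 0]` to `[1 1 0]`, and both faces share the edge from `A` to `B`, so the hypotenuses meet on a single edge.

```clojure
;; Hypothetical helpers for illustration only.

(defn v- [a b] (mapv - a b))
(defn dot [a b] (reduce + (map * a b)))

(defn barycentric
  "Barycentric weights [u v w] of point `p` relative to triangle
  [a b c]; `p` is implicitly projected onto the triangle's plane."
  [[a b c] p]
  (let [v0 (v- b a), v1 (v- c a), v2 (v- p a)
        d00 (dot v0 v0), d01 (dot v0 v1), d11 (dot v1 v1)
        d20 (dot v2 v0), d21 (dot v2 v1)
        denom (- (* d00 d11) (* d01 d01))
        v (/ (- (* d11 d20) (* d01 d21)) denom)
        w (/ (- (* d00 d21) (* d01 d20)) denom)]
    [(- 1 v w) v w]))

(defn map-triangle
  "Return a function taking a point on the display triangle `to`
  back to the matching point on the source triangle `from`."
  [to from]
  (fn [p]
    (let [weights (barycentric to p)]
      (apply mapv +
             (map (fn [wt vertex] (mapv #(* wt %) vertex))
                  weights
                  from)))))

;; Tetrahedron vertices; D is assumed, see above.
(def A [-1 -1 1])
(def B [1 -1 -1])
(def C [-1 1 -1])
(def D [1 1 1])

;; Each face looks up one half of the square slice in the z = 0 plane.
(def face-abc->lower (map-triangle [A B C] [[-1 -1 0] [1 1 0] [1 -1 0]]))
(def face-abd->upper (map-triangle [A B D] [[-1 -1 0] [1 1 0] [-1 1 0]]))
```

The midpoint of the shared edge, `[0 -1 0]`, maps to the centre of the diagonal, `[0 0 0]`, through both functions, which is what keeps the two halves of the slice joined up.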

This is the result: